Speech and music

Speech and music are fundamental to society, to being human, and to health and well-being. Our research improves understanding of these vital sounds, including how they are made, how they can be manipulated using machine learning, and how people respond to them. They are also integral to Architectural Acoustics and to our work on Aural Diversity.

Sound accessibility

A strong research focus is improving the accessibility of speech and music for people with hearing differences.

- 12 million adults in the UK are deaf, have hearing loss or tinnitus.

- This is expected to rise to 14 million by 2035 due to the ageing population.

Two large current EPSRC projects are:

- Clarity

  - A significant challenge for hearing aid users is hearing speech in noisy situations. Clarity is therefore running a series of machine learning challenges to improve the processing of speech in noise for hearing aids. As well as being a catalyst for improved speech enhancement for people with hearing loss, the challenges are developing new objective methods for measuring speech intelligibility.

- Cadenza

  - Cadenza is improving music mixing and processing for people with hearing loss. The methods might be applied to hearing aids or consumer devices. Alongside machine learning challenges, Cadenza is working to improve understanding of what people with hearing loss want from music.

Audio Engineering

Digital signal processing, spatial audio and the perception of audio form an important part of our research. The University’s presence at MediaCityUK alongside the BBC is an important driver for research. The research centre is a lead partner in the BBC’s Audio Research Partnership. We are also a test house and carry out commercial R&D for audio engineering companies.

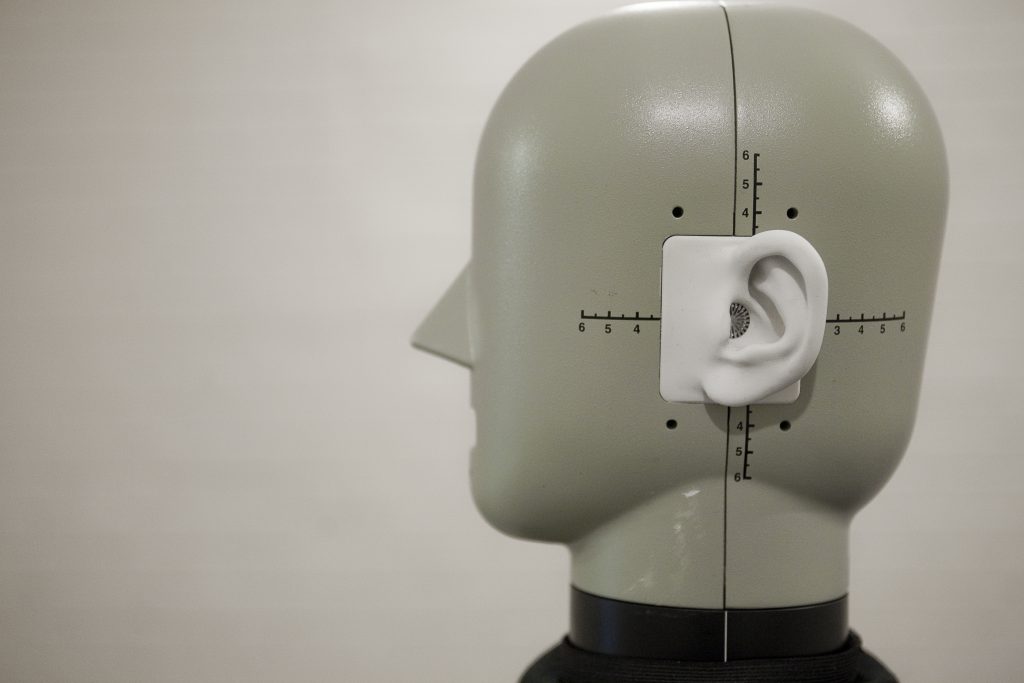

Spatial Audio

We have many different spatial audio systems, including wavefield synthesis, ambisonics and binaural. These are used on research projects with the BBC to investigate the future of spatial audio, such as adding height to surround sound systems and what happens when listeners are outside the sweet spot.

The future of broadcast audio was investigated in the EPSRC Programme Grant, S3A: Future Spatial Audio for an Immersive Listener Experience at Home. 3D sound can offer listeners the experience of being there at a live event, such as the Proms, but currently requires highly controlled listening spaces and loudspeaker setups. The goal of S3A was to realise practical 3D audio.

One outcome of the grant was Media Device Orchestration, where ad-hoc arrays of laptops and mobile phones are used to create a surround sound system. Another outcome was investigating the use of Object-Based Audio (OBA) to improve accessibility, including trialling new technologies with BBC Casualty.

Archive of selected projects

Broadcast audio

The FascinatE project developed a system that allows end-users to interactively view and navigate around an ultra-high-resolution video panorama of a live event, with the accompanying audio automatically changing to match the selected view. The output is adapted to the viewer's particular kind of device, covering anything from a mobile handset to an immersive panoramic display. On the production side, this required the development of new audio and video capture systems, and of scripting systems to control the shot framing options presented to the viewer. Intelligent networks with processing components repurpose the content to suit different device types and framing selections, and user terminals supporting innovative interaction methods allow viewers to control and display the content.

This work led to our spin-out company SalsaSound, which provides AI-based audio mixing solutions for content creators to improve their storytelling with more engaging, cinematic and higher quality audio for live sports.

Audio signal processing

- Measurement of transmission channels using naturalistic signals

- Active room acoustic metadiffusers [1,2,3]

- Improving TV sound for hearing impaired people

- Active shielding – an innovation based on the differential potential method

- The Good Recording Project – blind signal processing to evaluate audio quality

Psychoacoustics

- Neural models of sound localisation

- Assessment of components and codecs for music reproduction

- Assessing the quality of low frequency audio reproduction in critical listening spaces

- The Good Recording Project – perception of audio quality with user generated content

- Measuring the moods of music from BBC theme tunes

- Making sense of sound – Salford led the work on the perception of everyday sounds

Contact

Please contact Ben Shirley at b.g.shirley@salford.ac.uk.